By Julian Diep • Published April 27, 2026 • 24 min read • Methodology v1.0

A homeowner with a broken furnace at 9 PM no longer types "best HVAC company in Dallas" into Google and clicks through three blue links. She asks ChatGPT. Or Perplexity. Or Gemini. Or she searches Google and reads the AI Overview at the top before scrolling. AI-referred sessions to commercial sites grew 527 percent in the first five months of 2025 alone (Adobe Analytics). And the businesses that get cited inside those answers earn 35 percent more organic clicks and 91 percent more paid clicks than they do on the same queries without a citation (Seer Interactive, September 2025).

Most contractors are completely invisible inside AI answers. Not because they are bad businesses. Because the signals that drive AI citation are different from the signals that rank a website on Google or place a Local Services Ad above the map pack. A contractor can be position 1 in Google, run a healthy LSA campaign, and still get zero mentions when someone asks Perplexity "who is the best plumber in Phoenix?"

This page is the first contractor-specific scoring methodology for AI search visibility. It is built from the public research that exists (Princeton's GEO paper at KDD 2024, the DigitalBloom AI Citation Visibility Report, Pew, Seer Interactive, BrightLocal, Profound, Anthropic's Mapping the Mind), the platform-specific behavior data on how ChatGPT, Perplexity, Gemini, AI Overviews, and Claude actually pick what to cite, and the operational reality of running marketing for home service contractors.

Why AI Visibility Matters Now (Even If Your Phone Still Rings)

Three numbers matter for understanding the shift. First, AI Overviews now appear in 13 to 21 percent of all U.S. searches depending on the source and date (Semrush mid-2025, Search Engine Journal early 2025). For local searches specifically, the trigger rate is lower (around 7.9 percent vs. 22.8 percent for non-local), which is good news for emergency contractor queries but bad news for top-of-funnel research questions homeowners ask before they pick up the phone (WordStream 34 AI Overviews Stats).

Second, when AI Overviews do trigger, organic click-through rate drops hard. Pew Research (July 2025, 900 U.S. adults) found CTR fell from 15 percent without AIO to 8 percent with AIO. Only 1 percent of users clicked a link inside the AIO itself. Seer Interactive's larger sample (3,119 search terms, 25.1 million impressions) measured a 61 percent drop in organic CTR and a 68 percent drop in paid CTR on queries with AIOs. Both data sources confirm the same direction. The AI summary keeps users on the SERP. Most of them never reach your website.
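Pew reports its CTR figures as two absolute rates, while Seer reports a relative drop, so it helps to put both on the same basis. A minimal sketch of that arithmetic, using only the Pew numbers quoted above (the function name is illustrative, not from either study):

```python
def relative_drop(before: float, after: float) -> float:
    """Return the relative decline from `before` to `after`, in percent."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Pew figures quoted above: 15 percent CTR without an AIO, 8 percent with one.
pew_drop = relative_drop(15, 8)
print(f"Pew relative CTR drop: {pew_drop:.1f}%")  # prints 46.7%
```

On that basis Pew's result is a drop of roughly 47 percent, which sits below Seer's 61 percent but points the same way.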

Third, and this is the part most contractors miss: the businesses cited inside the AI summary do better than they would without one. Seer's data shows brands cited inside AIOs gain 35 percent more organic clicks and 91 percent more paid clicks vs. the same query without citation. Citation is the new ranking. If you are inside the answer, AI Overviews helps you. If you are outside, it cannibalizes you.

How LLMs Actually Decide What to Cite

Before we get to the score, you need a working mental model of how an AI engine produces a citation. The short version: it is not magic, it is a retrieval pipeline, and the inputs are knowable.

There are two architectures. The first is model-native synthesis, where the model answers from training data alone (default ChatGPT without browsing, Claude without web tools). The second is retrieval-augmented generation (RAG), where a query triggers a live search, a re-ranker scores candidate documents, and the model synthesizes an answer with citations (Perplexity by default, Google AI Overviews, ChatGPT Search, Gemini in grounded mode, Claude with web tools). Most consumer-facing answers in 2026 use RAG.

What feeds the model side: training data. About 64 percent of large language models analyzed by Adnan Masood and Oxylabs use some filtered version of Common Crawl. Common Crawl prioritizes pages by Harmonic Centrality, which is essentially a measure of how connected a page is in the broader web graph. The same metric ranks Wikipedia, YouTube, Facebook, and Google as the highest-weighted domains. This is why Wikipedia citations punch so far above their weight in ChatGPT (47.9 percent of ChatGPT responses cite Wikipedia per DigitalBloom 2025).

What feeds the retrieval side: indexed web content scored by relevance and authority signals that vary by engine. Perplexity uses its own index plus partner indexes. Google AI Overviews uses Google's regular index but applies different ranking logic. Gemini grounds heavily in Google Search. Claude with web tools uses Brave Search. ChatGPT Search uses Bing plus its own additions. The 11 percent cross-platform overlap finding from Profound is the headline number here. Citation strategies must be platform-specific because the input pools are not the same.

What gets cited is also affected by content structure. The Princeton GEO study (Aggarwal et al., KDD 2024, arXiv:2311.09735) ran 10,000 queries across multiple AI engines and tested specific content interventions. Adding citations to your content lifted Position-Adjusted Word Count (PAWC, their citation-share metric) by 30 to 40 percent. Adding statistics did the same. Adding direct quotations did the same. The bigger surprise: lower-ranked sites benefited far more than top-ranked sites. A page sitting at rank 5 saw a 115.1 percent visibility lift from citing sources. Keyword stuffing did nothing. Fluency and readability edits produced smaller gains of roughly 15 to 30 percent.

The takeaway: citations, statistics, and quotations are not stylistic choices. They are GEO interventions with measurable effects on whether your content gets surfaced. This methodology bakes them into the content depth weighting.

Per-Engine Citation Behavior

Because only 11 percent of cited domains overlap across AI platforms (Profound), a contractor cannot win citation across all engines with a single tactic. Here is how the major engines actually pick sources, based on the most recent public data sets.

| Engine | Default citation behavior | Top citation source | Avg citations per response | Freshness bias |
|---|---|---|---|---|
| Perplexity | Live RAG, per-claim inline citations | Reddit 46.7% | 21.87 (highest) | Strong (50% under 13 weeks old) |
| ChatGPT Search | Hybrid RAG plus memory | Wikipedia 47.9%, Reddit 11.3% | Moderate | Mixed (76.4% under 30 days when freshness matters) |
| Google AI Overviews | Tightly grounded in Google index | Reddit 21%, YouTube 18.8%, Quora 14.3% | Moderate | Weakest of the three |
| Gemini | Grounded in Google Search | 52% brand-owned sites | Moderate | Moderate |
| Claude (web tool) | Brave Search retrieval, conservative | Mixed | Low | Moderate |

Two implications for contractors. First, Reddit visibility is not a "nice to have." Reddit is the single most-cited source across Perplexity, AI Overviews, and ChatGPT combined (Profound, Semrush, Search Engine Land 8,000-citation study). Even one positive thread in r/HVAC, r/plumbing, or a city-level subreddit can carry meaningful weight. Second, ChatGPT and Perplexity are sensitive to recency in a way Google AI Overviews is not. A page with a stale dateModified gets retrieved less often by Perplexity than the same page modified within the last 13 weeks.

Volatility note: AI engine citation behavior shifts at the platform level on no public schedule. The September 2025 ChatGPT update saw Reddit citations drop from roughly 60 percent of weighted citations down to 10 percent inside one product release, then partially recover. Wikipedia citations dropped from 55 percent to 20 percent in the same window. Any methodology that does not account for this kind of volatility is going to be wrong within 90 days. The version history at the bottom of this page documents when weights shift.

The 11 Signals That Predict AI Citation

Each signal below has its own weight, sub-signals, and research basis. Read the full set before drawing conclusions about what to fix first. The biggest score gains rarely come from the obvious places.

1. Brand Mentions and Entity Authority

20 points

The single largest weight in the framework, and the signal most contractors underinvest in. The DigitalBloom 2025 AI Citation Visibility Report (75,000 brand sample) measured branded search volume against actual AI citation rates and found a correlation of r = 0.334. That is the strongest predictor in their dataset, larger than backlinks, larger than schema, larger than domain authority. Quattr's parallel research found brands in the top quartile of web mentions earn more than 10 times the AI citations of brands in the next quartile. And DigitalBloom found that a brand mentioned positively across at least 4 non-affiliated sources is 2.8 times more likely to appear in ChatGPT.

For a contractor, this is the long game. Sub-signals: branded search volume from Google Search Console, total mentions of your business name across the open web (excluding your own properties), platform diversity of those mentions (BBB, association sites, news, blogs, forums, podcasts), and co-occurrence with industry terms. The lever is consistent investment in earned media, partnerships, podcast appearances, association memberships with public profile pages, sponsorships of local events that get press coverage, and high-quality directory placements.

Sources: DigitalBloom 2025 AI Citation Visibility Report; Quattr brand mentions guide; Conductor AI mentions research.

2. Google Business Profile Health

15 points

Google AI Overviews "now pulls extensively from GBP data when answering local queries" (Local Falcon and ALM Corp analyses). For local commercial intent, the GBP is one of the highest-leverage assets a contractor controls. Reviews now contribute roughly 20 percent of local pack ranking weight (BrightLocal 2025), up from 16 percent in 2023, and as much as 32 percent for businesses with 200 or more reviews and active engagement (Mapranks 2025). Profiles with complete information receive seven times more clicks than incomplete ones.

Sub-signals: category accuracy (the number-one mistake per multiple BrightLocal surveys), profile completeness (hours, attributes, services list, service area, payment methods), photo count and recency, post cadence, Q&A activity, Messages enabled with fast response, and direction-request volume. The interesting wrinkle for AI Overviews: AIO does not appear to apply distance-decay ranking the same way the local map pack does. A contractor 30 miles away with stronger entity signals can outrank a closer competitor inside AIO answers (Local Falcon).

Sources: BrightLocal 2025 Local Algorithm and Ranking Factors; Local Falcon AIO Whitepaper; Mapranks 2025 GBP AI Guide.

3. Reviews: Diversity, Recency, Volume

12 points

Reviews matter for AI engines for the same reason they matter for human consumers: they are a credibility check across multiple platforms, and AI engines validate brands across a portfolio of review sites. BrightLocal's 2025 Local Consumer Review Survey found 74 percent of consumers check 2 or more platforms before hiring. That same multi-platform consumption is mirrored in AI grounding. Yelp's January 2026 acquisition of Hatch ($270 million) was an explicit play to license review data into AI ecosystems.

For a contractor, the signal stack runs: Google review count, Google review velocity (new reviews per trailing 90 days, the highest-leverage variable), average rating (4.5+ is the threshold), response rate to reviews, presence on Yelp / BBB (140 million annual users) / Angi / Houzz / Facebook / Thumbtack / HomeAdvisor / Porch, plus trade-specific platforms. The single biggest mistake contractors make is assuming Google reviews are enough. AI engines weigh diversity. A contractor with 80 Google reviews and 30 Yelp reviews and 20 BBB reviews looks more credible to a model than one with 200 Google-only reviews.

Sources: BrightLocal 2025 Local Consumer Review Survey; BrightLocal Local Algorithm; Yelp/Hatch acquisition January 2026.

4. Structured Data (Schema.org)

10 points

Microsoft Bing's Fabrice Canel confirmed at SMX Munich in March 2025 that schema markup helps Bing's LLMs understand content. Schema App's analysis showed that knowledge-graph-grounded LLMs jump from roughly 16 percent factual accuracy to over 50 percent when structured data is included in retrieval. Schema is, in effect, the format LLMs were trained to read. Only 12.4 percent of registered domains have any schema as of 2025 (Schema App), which means schema is a competitive advantage in 2026 simply by being in place.

For contractors, the priority order is: LocalBusiness (with the trade-specific subtype where available, like HVACBusiness, Plumber, RoofingContractor) plus a sameAs array linking to GBP, LinkedIn, Facebook, BBB, association profiles, and Wikidata if you have an entry. Then Service for each offering. Then FAQPage on every page that answers questions (this directly feeds AI Overviews answer extraction). Then Review and AggregateRating. Then BreadcrumbList. Then Person for the founder with sameAs to LinkedIn. For research-heavy pages, Dataset markup. For voice and AI surfaces, SpeakableSpecification on the intro and key takeaways.
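The first item in that priority order can be sketched as a small script that emits a JSON-LD block. This is a minimal illustration, not a complete markup set: every business detail below (name, URLs, phone, address, the Wikidata ID) is a hypothetical placeholder, and `to_jsonld_script` is just a helper name chosen for this example.

```python
import json

# Hypothetical business details; replace every value with your own.
local_business = {
    "@context": "https://schema.org",
    "@type": "HVACBusiness",  # trade-specific LocalBusiness subtype
    "name": "Example Heating & Air",
    "url": "https://www.example.com/",
    "telephone": "+1-555-010-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example St",
        "addressLocality": "Dallas",
        "addressRegion": "TX",
        "postalCode": "75201",
        "addressCountry": "US",
    },
    # sameAs ties the website entity to the same business elsewhere.
    "sameAs": [
        "https://www.linkedin.com/company/example-heating-air",
        "https://www.facebook.com/exampleheatingair",
        "https://www.wikidata.org/wiki/Q00000000",  # placeholder Q-item
    ],
}


def to_jsonld_script(data: dict) -> str:
    """Wrap a schema.org dictionary in a JSON-LD script tag."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(to_jsonld_script(local_business))
```

The printed tag would go in the page's HTML. Service, FAQPage, and the other types in the list follow the same pattern with their own properties.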

Sources: Bing's Fabrice Canel at SMX Munich March 2025; Schema App "Why structured data, not tokenization, is the future of LLMs."

5. Content Depth and GEO Formatting

10 points

DigitalBloom's analysis of 75,000 cited URLs found pages over 20,000 characters average 10.18 AI citations vs. 2.39 for pages under 500 characters. A 4x to 5x lift driven entirely by depth. The same study found comparative listicles (with side-by-side tables and clear winners by category) take 32.5 percent of all AI citations, the highest-performing format. Princeton's GEO paper added the specific interventions: citing sources lifts citation share 30 to 40 percent, adding statistics does the same, adding direct quotations does the same, and stacking all three has additive effects.

For contractors, this means cornerstone pages need to be substantive (5,000+ words on hub topics like "Google LSA cost guide") with real data, source citations, and structured comparisons (tables, score frameworks, decision trees). Paragraph length matters too: the RAG chunking sweet spot is 40 to 60 words per paragraph, which is also what feeds AI Overviews answer extraction cleanly. Long walls of text are harder to cite. Short skimmable sections with quoted statistics get pulled.
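The 40-to-60-word guideline is easy to check mechanically. The sketch below assumes the page copy is available as plain text with blank lines between paragraphs; the function names and the default thresholds (taken from the range above) are illustrative.

```python
import re


def paragraph_word_counts(text: str) -> list[int]:
    """Count whitespace-separated words in each blank-line-separated paragraph."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    return [len(p.split()) for p in paragraphs]


def flag_paragraphs(text: str, low: int = 40, high: int = 60) -> list[tuple[int, int]]:
    """Return (paragraph index, word count) for paragraphs outside low..high."""
    return [
        (index, count)
        for index, count in enumerate(paragraph_word_counts(text))
        if not low <= count <= high
    ]


sample = " ".join(["word"] * 50) + "\n\n" + "too short"
print(flag_paragraphs(sample))  # the second paragraph is flagged
```

Headings and list items will show up as short "paragraphs" in this simple split, so treat the output as a list of candidates to review rather than a list of errors.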

Sources: DigitalBloom 2025 LLM Visibility Report; Aggarwal et al., GEO: Generative Engine Optimization, KDD 2024 (arXiv:2311.09735).

6. Knowledge Graph and sameAs Lock

8 points

Google's Knowledge Graph is the connective tissue between an entity (your business) and the surfaces that cite it (Search, Maps, AIO, Gemini, the Knowledge Panel). Wikidata feeds the Knowledge Graph more directly than Wikipedia (Reputation X, Ahrefs Knowledge Graph guide), which matters because Wikipedia notability rules block almost all local contractors but Wikidata Q-items are achievable. The "sameAs lock" is what binds your website's Organization schema to your GBP, your LinkedIn, your Facebook, your BBB profile, your association memberships, and your Wikidata entry, so Google can confirm they are all the same business.

Sub-signals: presence of a Wikidata Q-item, completeness of the sameAs array on the website's Organization schema, NAP (Name/Address/Phone) consistency across the open web, presence in industry-specific knowledge panels and databases (state contractor licensing, NATE, EPA, IICRC, ACCA member directory, PHCC member directory, NRCA directory), and Crunchbase / LinkedIn Company entries. Without this lock, Google may treat your website as a separate entity from your GBP, fragmenting your authority signals.
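NAP consistency is one of the few sub-signals here that can be checked with a few lines of code. The sketch below compares name and phone across listings after light normalization. The listing data is invented for illustration, US phone formats are assumed, and address comparison is left out because abbreviations ("St" vs. "Street") need more careful handling.

```python
import re


def normalize_phone(raw: str) -> str:
    """Keep digits only and drop a leading US country code."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def normalize_name(raw: str) -> str:
    """Lowercase and collapse punctuation so only wording differences remain."""
    return re.sub(r"[^a-z0-9]+", " ", raw.lower()).strip()


def nap_mismatches(listings: dict[str, dict[str, str]], reference: str = "website") -> list[str]:
    """Compare every listing's name and phone against the reference listing."""
    ref = listings[reference]
    problems = []
    for source, entry in listings.items():
        if source == reference:
            continue
        if normalize_name(entry["name"]) != normalize_name(ref["name"]):
            problems.append(f"{source}: name differs")
        if normalize_phone(entry["phone"]) != normalize_phone(ref["phone"]):
            problems.append(f"{source}: phone differs")
    return problems


# Hypothetical listings for one business.
listings = {
    "website": {"name": "Example Heating & Air", "phone": "(555) 010-0100"},
    "gbp": {"name": "Example Heating and Air", "phone": "+1 555-010-0100"},
    "bbb": {"name": "Example Heating & Air", "phone": "555.010.0199"},
}
print(nap_mismatches(listings))
```

Here the GBP entry is flagged for spelling out "and" and the BBB entry for a different phone number, which are the kinds of small differences that can fragment an entity.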

Sources: Reputation X knowledge panel sources guide; Ahrefs Knowledge Graph guide.

7. Freshness Signals

7 points

Quattr and Ahrefs' analysis of 17 million AI citations found AI-cited content runs 25.7 percent fresher than traditional Google organic results. Sixty-five percent of AI bot retrievals target content from the past year, 79 percent from under 2 years, and only 6 percent from over 6 years old. Perplexity is the most freshness-biased: 50 percent of its citations come from content under 13 weeks old. ChatGPT applies freshness selectively, citing pages under 30 days 76.4 percent of the time when the query has temporal sensitivity.

Sub-signals: dateModified schema field on every key page, refreshed within the trailing 90 days; GBP posts in the last 30 days; review velocity over the last 90 days; new content cadence (at least 2 to 4 new or substantively updated pages per quarter on a contractor site); refreshed publication dates with actual content updates (not just timestamp swaps, which Google Search Liaison has called out repeatedly).
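The 90-day dateModified threshold can be checked with a short script. This sketch assumes the dateModified values have already been pulled from each page's schema as ISO 8601 strings; the page paths and dates are made up, and `is_fresh` is an illustrative helper name.

```python
from datetime import date


def is_fresh(date_modified: str, today: date, max_age_days: int = 90) -> bool:
    """True if an ISO 8601 dateModified falls within the trailing window."""
    # Only the calendar date matters here, so ignore any time component.
    modified = date.fromisoformat(date_modified[:10])
    age_days = (today - modified).days
    return 0 <= age_days <= max_age_days


# Hypothetical pages and their dateModified values.
pages = {
    "/ac-repair/": "2026-03-01",
    "/furnace-installation/": "2025-12-01T09:30:00",
}
today = date(2026, 4, 27)
stale = [path for path, stamp in pages.items() if not is_fresh(stamp, today)]
print(stale)
```

A stale flag is a prompt to update the page content, not just the timestamp.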

Sources: Quattr 17M citation analysis; Ahrefs blog on fresh content; Perplexity citation behavior data via Profound.

8. UGC Footprint (Reddit, Quora, Forums)

6 points

Reddit alone is the single most-cited domain across ChatGPT, Perplexity, and Google AI Overviews aggregated (Semrush 3-month study, Profound, Search Engine Land 8,000-citation analysis). Quora and YouTube round out the top three on AIO. The reason is two-fold. Google licensed Reddit content for training in February 2024, raising its presence in retrieval. And Reddit content is unfiltered consumer language, which models treat as ground truth for opinion-based queries (best, recommended, worst, avoid).

For contractors, the signal stack is: branded mentions on Reddit (any subreddit, but trade-specific and city-specific weigh more), Quora answers from your team that build authority, NextDoor presence and reviews, presence in industry forums (Plumbers Talk, HVACSite, ContractorTalk, Roofers Connection), and YouTube channel activity. The September 2025 ChatGPT update is an important caveat: Reddit's citation share is volatile at the platform level. Do not over-index on a single source. Build a diversified UGC footprint.

Sources: Profound AI Platform Citation Patterns; Semrush most-cited domains study; Search Engine Land 8,000 AI citations analysis.

9. E-E-A-T and Author/License Signals

5 points

Anthropic's "Mapping the Mind of a Large Language Model" (May 2024) showed that LLMs internally encode "entity" features. The working inference here (not a result from the paper itself) is that identifiable, machine-readable author and organization identity raises retrieval probability. For local services where trust and licensing actually determine whether a homeowner hires the business, E-E-A-T signals do double duty: they help AI engines bind the entity correctly, and they convert humans on the page.

Sub-signals for contractors: founder/owner bio page with Person schema, LinkedIn linked via sameAs, displayed state contractor license number with a verifying link to the state board, displayed insurance and bond details with carrier and policy number, association membership badges (NATE for HVAC, IICRC for water damage, NRCA for roofing, PHCC for plumbing, ACCA for HVAC, ESA or NECA for electrical), industry certifications (EPA 608, ASE, factory authorizations from Carrier, Trane, Lennox, GAF, etc.), manufacturer training credentials, and visible years-in-business with verification (BBB founding date, state filing date).

Sources: Anthropic, "Mapping the Mind of a Large Language Model," May 2024.

10. Earned Media and Press

4 points

Muck Rack's analysis of more than 1 million AI citations found 95 percent come from earned (unpaid) sources. Wire-distributed press release citations in AI answers grew 5x between July and December 2025. Trade media is over-indexed in LLM training data vs. general media for vertical queries (Demand Local). For contractors, earned media is harder to scale than reviews or schema, but it has compounding effects on entity authority.

Sub-signals: trade media mentions (Plumbing & Mechanical, ACHR News, Roofing Contractor, Landscape Management, Remodeler), local TV and news segments, HARO / Connectively (free since April 2025) / Qwoted / Featured.com placements where the contractor was quoted as an expert, and press release distribution through PRNewswire or Business Wire (these get picked up by syndicated outlets and feed both Google News and LLM training data).

Sources: Muck Rack 1M AI citation analysis; Demand Local "Digital PR for GEO Campaigns."

11. Technical Site Health

3 points

The smallest weight, but a hard floor. If your pages are not indexable, they cannot be retrieved. If the site is broken on mobile, AIO (which inherits the mobile-first index) sees a worse version than the human visitor. Technical SEO is necessary but not differentiating. The 3 points signal that this category is table stakes, not a growth lever.

Sub-signals: indexability of all key pages (no accidental noindex, robots.txt block, or canonical errors), page speed (Core Web Vitals at "Good" or better on at least 75 percent of pages), mobile-friendliness, HTTPS site-wide with no mixed content, valid canonical setup, no broken internal links. Run a quarterly audit. Fix what is broken. Move on.
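Two of the indexability checks above, an accidental noindex and a robots.txt block, can be scripted with the Python standard library alone. This is a minimal sketch that works on text you have already downloaded. The robots.txt content, HTML snippets, and URLs are invented examples, and it does not cover canonical errors or noindex sent via HTTP headers.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Detects <meta name="robots" content="...noindex..."> in a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        content = (attr.get("content") or "").lower()
        if name == "robots" and "noindex" in content:
            self.noindex = True


def has_noindex(html: str) -> bool:
    """True if the page carries a robots meta tag containing noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.noindex


def blocked_by_robots(robots_txt: str, url: str, agent: str = "*") -> bool:
    """True if the given robots.txt rules disallow the URL for the agent."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return not rules.can_fetch(agent, url)


# Hypothetical inputs.
robots = "User-agent: *\nDisallow: /private/\n"
page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(blocked_by_robots(robots, "https://www.example.com/services/"))
print(has_noindex(page))
```

Running both functions over the list of key pages each quarter is enough to catch the most common accidental blocks.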

Sources: Standard technical SEO requirements; Google Search Central documentation.

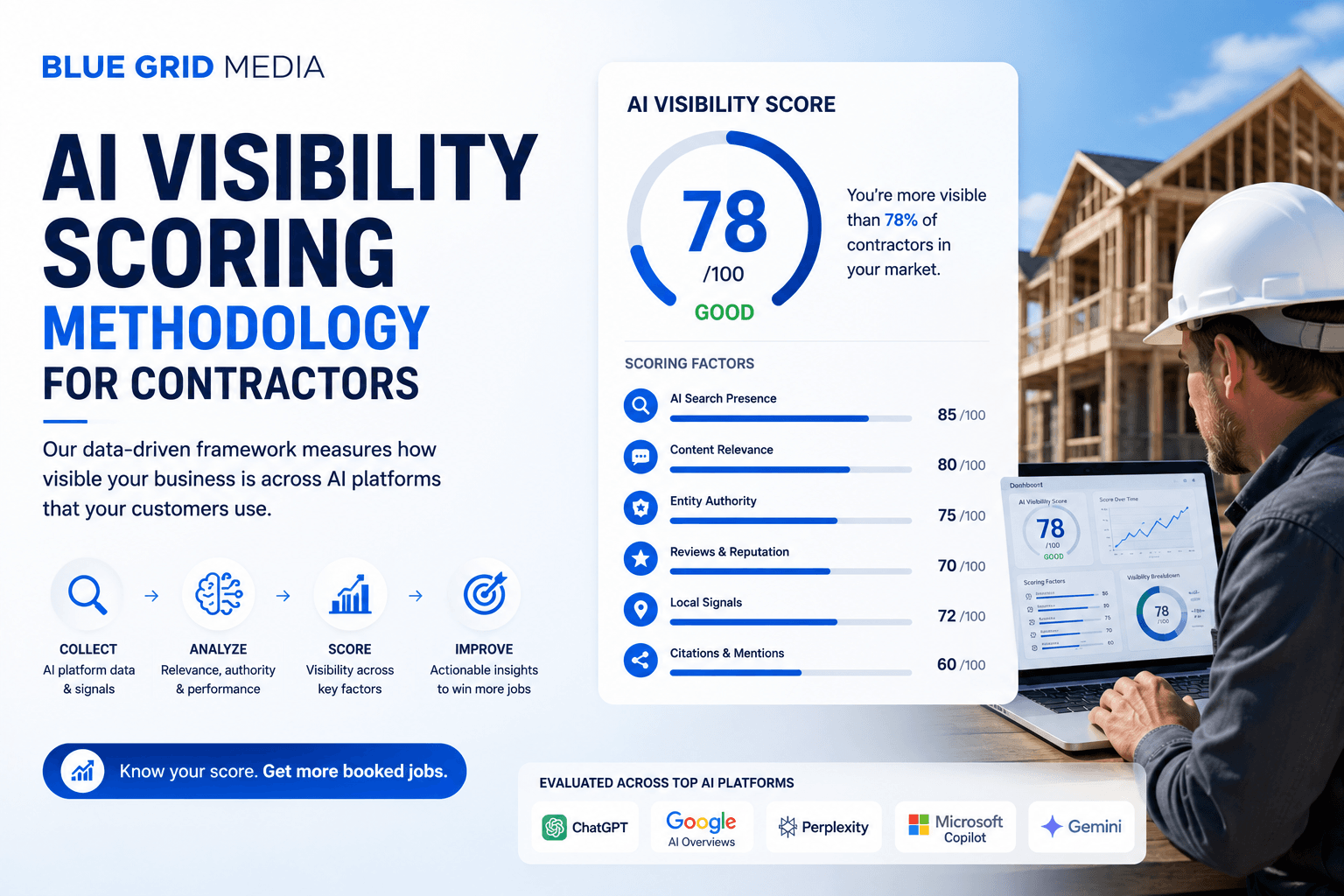

The 100-Point Scoring Framework (Full Breakdown)

Each signal contributes a category score that totals to 100. Within each category, sub-signals are scored against research-backed thresholds and rolled up. The thresholds below reflect the public research at the time of v1.0 (April 2026). Methodology updates appear at the bottom of this page.

| Category | Weight | Sub-signals scored | Source basis |
|---|---|---|---|
| Brand mentions and entity authority | 20 | Branded search volume, number of independent web mentions, platform diversity, co-occurrence with industry terms | DigitalBloom r=0.334; Quattr 10x |
| Google Business Profile health | 15 | Categories, completeness, photo count + recency, post cadence, Q&A, hours, attributes, Messages | BrightLocal ~20% local pack weight; Mapranks up to 32% |
| Reviews: diversity, recency, volume | 12 | Google count + 90d velocity; Yelp/BBB/Angi/Houzz/FB presence; 4.5+ avg; review responses | BrightLocal 2025 LCRS |
| Structured data / Schema | 10 | LocalBusiness + sameAs, Service, FAQPage, Review, Person, BreadcrumbList | Schema App 16% to 50%+ factual accuracy; Bing March 2025 |
| Content depth and GEO formatting | 10 | Long-form pages, comparative tables, citations + statistics + quotations, 40-60 word paragraphs | Princeton GEO +30-40%; DigitalBloom 10.18 vs 2.39 |
| Knowledge Graph and sameAs lock | 8 | Wikidata Q-item; sameAs to LinkedIn/Crunchbase/BBB/associations; consistent NAP | Reputation X; Ahrefs KG |
| Freshness signals | 7 | dateModified within 90 days; GBP posts in last 30 days; 90d review velocity | Quattr 25.7%; Perplexity 13-week |
| UGC footprint (Reddit/Quora) | 6 | Branded mentions on Reddit, Quora, NextDoor, industry forums, YouTube | Reddit = #1 cited domain |
| E-E-A-T and license signals | 5 | Founder bio + LinkedIn sameAs, displayed licenses, insurance, association badges | Anthropic Mapping the Mind |
| Earned media and press | 4 | Trade media, local TV/news, HARO/Qwoted/Featured placements, press releases | Muck Rack 95% earned; 5x growth |
| Technical site health | 3 | Indexability, mobile, HTTPS, canonical, page speed | Table stakes |
| Total | 100 | | |
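The roll-up in the table is a weighted sum. The sketch below uses the published category weights and assumes, for illustration only, that each category's sub-signals have already been reduced to a completion fraction between 0 and 1. How sub-signals roll up inside a category is not specified here, so that step is left out, and the dictionary keys are shorthand invented for this example.

```python
# Category weights from the table above; the keys are illustrative shorthand.
WEIGHTS = {
    "brand_mentions": 20,
    "gbp_health": 15,
    "reviews": 12,
    "structured_data": 10,
    "content_depth": 10,
    "knowledge_graph": 8,
    "freshness": 7,
    "ugc_footprint": 6,
    "eeat_license": 5,
    "earned_media": 4,
    "technical_health": 3,
}
assert sum(WEIGHTS.values()) == 100


def visibility_score(fractions: dict[str, float]) -> float:
    """Weighted sum of per-category completion fractions (each 0.0 to 1.0).

    Categories missing from `fractions` are treated as 0 (an assumption).
    """
    total = 0.0
    for category, weight in WEIGHTS.items():
        fraction = fractions.get(category, 0.0)
        if not 0.0 <= fraction <= 1.0:
            raise ValueError(f"{category} fraction must be between 0 and 1")
        total += weight * fraction
    return round(total, 1)


# Hypothetical contractor: complete GBP, half-credit reviews, sound site.
example = {"gbp_health": 1.0, "reviews": 0.5, "technical_health": 1.0}
print(visibility_score(example))  # 15 + 6 + 3 = 24.0
```

A profile like this one, strong on Google and weak everywhere else, illustrates how a contractor can rank well locally and still score low.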

Score Bands

- 0 to 30: Invisible. Your business is unlikely to be cited by AI engines on any branded or service-related query. Multiple foundational signals are missing.

- 31 to 50: Emerging. Some signals are in place but the entity is not yet recognized as authoritative. Citations happen on long-tail or branded queries only.

- 51 to 70: Competitive. Cited intermittently on commercial queries. Visible to AI engines but not consistently surfaced ahead of competitors with stronger entity signals.

- 71 to 85: Strong. Consistently cited on branded and competitive commercial queries. Likely a top-three candidate for AI engines in your service area.

- 86 to 100: Dominant. Cited as a default option across multiple AI platforms for category-level queries. Few competitors operate at this level.
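The bands above can be expressed as a simple lookup. In this sketch the band edges come straight from the list; the handling of fractional scores (anything above 30 up to 50 counts as Emerging, and so on) is an assumption, since the bands are stated in whole numbers.

```python
# (upper bound, label) pairs taken from the score bands above.
BANDS = [
    (30, "Invisible"),
    (50, "Emerging"),
    (70, "Competitive"),
    (85, "Strong"),
    (100, "Dominant"),
]


def score_band(score: float) -> str:
    """Map a 0-100 score to its band label."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    for upper, label in BANDS:
        if score <= upper:
            return label
    raise AssertionError("unreachable: bands cover 0 to 100")


print(score_band(24))  # Invisible
print(score_band(71))  # Strong
```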

Prompt Patterns Homeowners Actually Use

The score is only useful if it is calibrated against the queries homeowners are really asking AI engines. Documented patterns from contractor marketing analyses (California Infotech, Metricus, Fat Cat Strategies, The 2 Cities) include:

- "Best [trade] near me"

- "Top [trade] in [city]" (e.g., "Top-rated HVAC company in Dallas")

- "Who is the best [trade] in [city] for [specific service]?"

- "Should I hire [Brand] or [Brand] for [job]?"

- "Is [Company Name] reputable?"

- "How much does [job] cost from a contractor in [city]?"

- "What [trade] companies in [city] are licensed and insured?"

- "Emergency [trade] in [city]"
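For a manual audit, these patterns can be expanded into a concrete prompt list to run through each engine. The sketch below covers a subset of the patterns above; the trade, city, service, and company values are placeholders, and `build_prompts` is a hypothetical helper, not part of any tool.

```python
from itertools import product

# A subset of the prompt patterns listed above, as format strings.
TEMPLATES = [
    "Best {trade} near me",
    "Top {trade} in {city}",
    "Who is the best {trade} in {city} for {service}?",
    "Is {company} reputable?",
    "What {trade} companies in {city} are licensed and insured?",
    "Emergency {trade} in {city}",
]


def build_prompts(trades: list[str], cities: list[str], service: str, company: str) -> list[str]:
    """Fill every template for every trade and city, dropping duplicates."""
    prompts = []
    for template, trade, city in product(TEMPLATES, trades, cities):
        prompt = template.format(trade=trade, city=city, service=service, company=company)
        if prompt not in prompts:
            prompts.append(prompt)
    return prompts


# Placeholder inputs for one contractor.
for prompt in build_prompts(["plumber"], ["Phoenix"], "water heater repair", "Example Plumbing Co."):
    print(prompt)
```

Logging which prompts produce a citation, per engine, gives a baseline to compare against after each round of fixes.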

Per-engine differences worth knowing:

- Perplexity typically returns 5 to 7 ranked options with bullet pros/cons, all hyperlinked. UGC sources weigh heaviest.

- ChatGPT returns 3 to 5 options with longer descriptive paragraphs, pulling heavier from directories (Yelp, Angi) and Wikipedia.

- Gemini leans on GBP and brand-owned content. Map-card style results.

- Claude (web tool) is the most conservative. Refuses to "rank" without verifiable sources. Cites less frequently per response.

A contractor scoring 70 or higher should expect citation across several of these prompt types. A contractor below 50 should not, regardless of how strong their Google rankings or LSA performance look.

What This Means If You Rank Well But Get No AI Citations

This is the most common situation we see when we audit contractors against the framework: a healthy GBP, decent reviews, position 1 or 2 in the local map pack for the main service-area term, an LSA budget producing booked jobs, and zero presence inside ChatGPT or Perplexity answers. The reason is almost always the same. The signals that drove the local SEO win (proximity, GBP optimization, on-page basics) are not the ones that drive AI citation. The contractor has been investing in a different game.

The fastest path from "ranks well, invisible to AI" to "cited by AI" is rarely more SEO. It is the four signals AI engines weight that traditional SEO tools do not stress:

- Brand mention growth. Press releases, podcast appearances, sponsorships with public profile pages, partnerships with local nonprofits, association directory listings. Three to six months of intentional investment moves the dial materially.

- UGC seeding. Get the team to answer questions in their trade subreddit when relevant. Build a Quora presence. Encourage satisfied customers to mention the business on NextDoor when asked for recommendations. Do not buy this. Cultivate it.

- Multi-platform review diversity. Most contractors over-index Google. Add 30 to 50 reviews on Yelp, BBB, Facebook, and at least one trade-specific platform.

- Schema and Knowledge Graph binding. The fastest single technical fix. A complete Organization schema with sameAs to GBP, LinkedIn, Facebook, BBB, association profiles, plus a Wikidata Q-item where applicable, often unlocks Knowledge Graph entity recognition within 60 to 90 days.

The remaining signals matter, but they tend to compound on top of these four. Fix the foundation first.

Methodology Versioning

v1.0 (April 27, 2026)

- Initial publication. 11 signals, 100-point scale.

- Weights set from DigitalBloom 2025 AI Citation Visibility Report, Princeton GEO 2024 paper (KDD), Profound platform citation patterns, BrightLocal 2025 Local Algorithm research, Pew/Seer 2025 CTR data.

- Score bands calibrated against pilot audits of 12 contractor portfolios across HVAC, plumbing, roofing, electrical, and landscaping.

AI engine citation behavior is volatile. We expect to publish v1.1 within 90 days as new data on AIO, ChatGPT Search, and Perplexity citation behavior accumulates. Each version will document which weights moved, why, and what the practical implication is for contractors.

Sources

Academic and primary research

- Aggarwal, Murahari, Rajpurohit, Kalyan, Narasimhan, Deshpande. "GEO: Generative Engine Optimization." arXiv:2311.09735, KDD 2024. arxiv.org/abs/2311.09735

- Anthropic, "Mapping the Mind of a Large Language Model" (May 2024). anthropic.com/research/mapping-mind-language-model

- Pew Research, "Do people click on links in Google AI summaries?" (July 22, 2025). pewresearch.org

- Seer Interactive, "AIO Impact on Google CTR: September 2025 Update." seerinteractive.com

AI visibility / citation research

- DigitalBloom 2025 AI Citation / LLM Visibility Report. thedigitalbloom.com

- Profound, "AI Platform Citation Patterns." tryprofound.com

- Search Engine Land, "How different AI engines generate and cite answers." searchengineland.com

- Search Engine Land, "AI search engines cite Reddit, YouTube, and LinkedIn most." searchengineland.com

- Semrush, Most-cited domains across AI search. semrush.com

- Quattr, "How to Get Brand Mentions in AI." quattr.com

- Yext, "AI Visibility 2025: How Gemini, ChatGPT, Perplexity Cite Brands." yext.com

- Schema App, "Why structured data, not tokenization, is the future of LLMs." schemaapp.com

Local SEO and GBP research

- BrightLocal Local Algorithm and Ranking Factors. brightlocal.com

- BrightLocal 2025 Local Consumer Review Survey. brightlocal.com

- Local Falcon AIO Whitepaper. localfalcon.com

- Mapranks 2025 GBP AI Guide. mapranks.com

- WordStream, 34 AI Overviews Stats. wordstream.com

Contractor-specific commentary

- Metricus, "Why Doesn't AI Mention My Contracting Business?" metricusapp.com

- California Infotech, "How AI Search Changes Home Service Customer Search." californiainfotech.com

- Demand Local, "Digital PR for GEO Campaigns." demandlocal.com

Frequently Asked Questions

What is AI visibility for a contractor?

Whether ChatGPT, Perplexity, Gemini, Claude, or Google AI Overviews cite or recommend your business when a homeowner asks them a service-related question. It is separate from Google rankings. A contractor can be position 1 in the local map pack and still be invisible inside AI answers because the signals that drive each are different.

How is the AI Visibility Score calculated?

It is a weighted 100-point score across 11 categories: brand mentions and entity authority (20), Google Business Profile health (15), review diversity and recency (12), structured data (10), content depth and GEO formatting (10), Knowledge Graph and sameAs lock (8), freshness signals (7), UGC footprint (6), E-E-A-T and license signals (5), earned media (4), and technical site health (3).

Why is brand search volume the biggest predictor of AI citation?

The DigitalBloom 2025 study measured the correlation between 30+ signals and actual citations across ChatGPT, Perplexity, and AI Overviews. Branded search volume came back at r=0.334, the single strongest predictor. Backlinks, which dominate traditional SEO, returned a weak or neutral correlation. The reason is that LLMs build entity associations from co-occurrence and frequency of mention across the open web.

Do I need a Wikipedia page to be cited by AI?

No, and almost no contractor will qualify for one. Wikipedia notability rules require independent reliable-source coverage that most local home service businesses cannot earn. The realistic substitute is a Wikidata entry, which has a much lower notability bar and feeds Google Knowledge Graph directly.

Why do AI engines cite Reddit so heavily?

Reddit is the single most-cited domain across ChatGPT, Perplexity, and Google AI Overviews per Profound and Semrush analyses. Two reasons. Google licensed Reddit content for training in February 2024, raising its presence in retrieval. And Reddit content is unfiltered consumer language, which models treat as ground truth for opinion-based queries.

Does my Google Business Profile affect AI citations?

Yes, heavily. Google AI Overviews pulls extensively from GBP data when answering local queries. Reviews now contribute roughly 20 percent of local pack ranking weight, and up to 32 percent for businesses with 200+ reviews and active engagement. AI Overviews does not appear to apply distance-decay ranking the same way the local map pack does, which means a contractor 30 miles away with stronger entity signals can outrank a closer competitor inside AIO answers.

How long does it take to improve an AI Visibility Score?

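Quick wins (technical SEO, schema fixes, GBP completeness, dateModified updates) move the score within 30 days. Mid-tier signals (review velocity, UGC footprint, content depth) take 60 to 120 days. Knowledge Graph entity recognition often follows a complete sameAs setup within 60 to 90 days. Brand mention growth is the slowest: expect material movement after three to six months of steady investment, and 6 to 12 months for the full effect.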

Is AI visibility different from SEO?

It overlaps with SEO but is not the same. Strong traditional SEO correlates with AI citation, but the additional signals AI engines weight (brand mentions across the open web, UGC platforms like Reddit, freshness, citations and statistics inside content) are not core ranking factors in classic Google search. A contractor can rank well organically and still be invisible in ChatGPT or Perplexity.

Are AI Overviews actually hurting traffic for contractors?

For local commercial queries, less than people fear. Only about 7.9 percent of local searches trigger AI Overviews vs. 22.8 percent of non-local. The map pack is largely intact for emergency-intent queries. The hit lands harder on informational top-of-funnel content. When AIOs do trigger, organic CTR drops 47 to 61 percent, but brands cited inside AIOs gain 35 percent more organic clicks.

Why score on a 100-point scale instead of pass/fail?

Pass/fail tools tell you something is broken without telling you which inputs to fix first. A 100-point breakdown across 11 categories with sub-weights surfaces the highest-leverage gaps and lets a contractor benchmark against competitors.

Will the methodology change over time?

Yes. AI engine citation behavior is volatile (the September 2025 ChatGPT update saw Reddit's share of weighted citations fall from roughly 60 percent to 10 percent inside one product release). The Methodology Versioning section above documents weight changes and signal additions. The framework is built to absorb shifts without requiring contractors to start over.

The Bottom Line

AI visibility is not a future concern. It is a 2026 concern. The contractors investing now in the inputs LLMs reward (brand mentions, structured data, reviews across multiple platforms, Reddit and Quora presence, content depth, Knowledge Graph binding) will compound over the next 24 months. The contractors who keep doing only what worked in 2022 will watch competitors pull ahead inside answers they cannot see.

This methodology is a starting point. The free scoring tool that uses it is in development. If you want to be on the list when it ships, or if you want a manual audit run against this framework now, book a free audit and we will walk you through where you stand and where the highest-leverage gaps are.